With Great Data Comes Great Responsibility: Good Practice in Statistics and Why it Matters

What are statistics?

Statistical analysis puts raw data into context and turns it into knowledge by separating significant outcomes from background noise. This allows policy makers and scientists to decide on their next course of action, informed by the analysis.

However, irresponsible statistical analysis can have catastrophic effects on scientific progress. Results falsely described as significant can act as a research red herring, wasting time and resources on a line of enquiry that is not viable. Conversely, a promising path of research can be overlooked and left unexplored because a less efficient route has been described as significant, making other lines of exploration seem unnecessary.

What are irresponsible statistics?

Because statistical analysis is such a powerful tool, it is often misused, with data misappropriated to emphasise the wrong elements of the story it is telling. These issues can arise (both intentionally and unintentionally) in a variety of ways:

- Intentional manipulation of the data, such as fishing for correlations without a null hypothesis to test. Without a hypothesis there is no context within which to frame an appropriate question, so chance correlations are easily mistaken for meaningful, even causal, relationships. Cherry-picking or selectively reporting data has the same effect: reporting data that supports a desired outcome while ignoring inconvenient data that does not fit the narrative.

Data can also be fabricated (or hallucinated by AI) in order to “improve the significance” of a dataset. “Fudging” data in this way is deeply irresponsible and, if discovered, can lead to published papers being retracted, damaging the reputations not only of the authors and journals involved but of academic publishing as a whole.

- Unintentional statistical misconduct can come from flawed experimental design. Poorly designed experiments and methods make statistical analysis difficult to carry out correctly, whether through unnecessary variables that differ between repeat experiments, a lack of control groups, or inappropriately small sample sizes, which lack statistical power and often cannot satisfy (or even be reliably checked against) the assumptions of many statistical tests.
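
To illustrate why fishing for correlations without a hypothesis is risky, here is a minimal Python sketch, assuming NumPy and SciPy are installed. It correlates every pair of 20 variables that are pure random noise; with a 0.05 threshold, roughly 1 pair in 20 can be expected to come out “significant” by chance alone. The function name `fish_for_correlations` and all parameter values are hypothetical choices for illustration.

```python
from itertools import combinations

import numpy as np
from scipy import stats


def fish_for_correlations(n_variables=20, n_observations=30, alpha=0.05, seed=0):
    """Correlate every pair of unrelated random variables and collect the 'hits'.

    Hypothetical helper for illustration: every column is independent noise,
    so any 'significant' correlation it finds is spurious by construction.
    """
    rng = np.random.default_rng(seed)
    data = rng.normal(size=(n_observations, n_variables))
    hits = []
    for i, j in combinations(range(n_variables), 2):
        r, p = stats.pearsonr(data[:, i], data[:, j])
        if p < alpha:
            hits.append((i, j, round(float(r), 2), float(p)))
    n_pairs = n_variables * (n_variables - 1) // 2
    return hits, n_pairs


hits, n_pairs = fish_for_correlations()
print(f"{len(hits)} of {n_pairs} pairs 'significant' at p < 0.05, all from pure noise")
```

Testing 190 pairs without a prior question all but guarantees some “findings”; a pre-stated hypothesis limits how many comparisons are made and gives each one a reason to exist.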

Too much pressure is put on scientists to produce significant results, which can lead to misrepresentation of p-values and “p-hacking”: analysing a dataset with a number of different statistical tests and reporting whichever gives the lowest p-value, whether or not it is the most appropriate test to use. In an effort to make results appear significant when they are not, phrases such as “this result is approaching significance” are often used. This is a red flag when reading a paper because, against a significance threshold set in advance, a result is either significant or it is not. If the p-value does not meet that threshold, the data does not provide enough evidence to distinguish the effect from noise, so describing a p-value that narrowly misses the threshold as “approaching significance” is simply disingenuous.
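
A small simulation can make the cost of p-hacking concrete. This is a sketch, assuming NumPy and SciPy: both groups are drawn from the same distribution, so every “significant” result is a false positive. Reporting a single test chosen in advance keeps the false-positive rate near the nominal 5%, while reporting the lowest p-value from six hypothetical analytic “forks” (three tests, each run with and without trimming so-called outliers) pushes it higher. The forks, sample sizes and seed are illustrative assumptions, not a description of any particular study.

```python
import numpy as np
from scipy import stats


def candidate_p_values(a, b):
    """Return p-values from several hypothetical 'analytic forks' on the same data."""
    p_values = [
        stats.ttest_ind(a, b).pvalue,                                # Student's t-test
        stats.ttest_ind(a, b, equal_var=False).pvalue,               # Welch's t-test
        stats.mannwhitneyu(a, b, alternative="two-sided").pvalue,    # Mann-Whitney U
    ]
    # Extra fork: drop "outliers" more than 2 SD from each group's mean, then re-test.
    a_trim = a[np.abs(a - a.mean()) <= 2 * a.std(ddof=1)]
    b_trim = b[np.abs(b - b.mean()) <= 2 * b.std(ddof=1)]
    p_values += [
        stats.ttest_ind(a_trim, b_trim).pvalue,
        stats.ttest_ind(a_trim, b_trim, equal_var=False).pvalue,
        stats.mannwhitneyu(a_trim, b_trim, alternative="two-sided").pvalue,
    ]
    return p_values


def false_positive_rates(n_sims=2000, n=20, alpha=0.05, seed=1):
    rng = np.random.default_rng(seed)
    honest = hacked = 0
    for _ in range(n_sims):
        # Both groups come from the SAME distribution: the null hypothesis is true.
        a = rng.normal(0.0, 1.0, n)
        b = rng.normal(0.0, 1.0, n)
        p = candidate_p_values(a, b)
        honest += int(p[0] < alpha)    # pre-specified test: Student's t-test only
        hacked += int(min(p) < alpha)  # report whichever fork "worked"
    return honest / n_sims, hacked / n_sims


honest_rate, hacked_rate = false_positive_rates()
print(f"Pre-specified test: {honest_rate:.3f} false-positive rate")
print(f"Lowest of six forks: {hacked_rate:.3f} false-positive rate")
```

The exact inflation depends on how many forks are tried and how similar they are to each other; the more alternatives a researcher is free to try after seeing the data, the worse it gets.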

What is good practice in statistics?

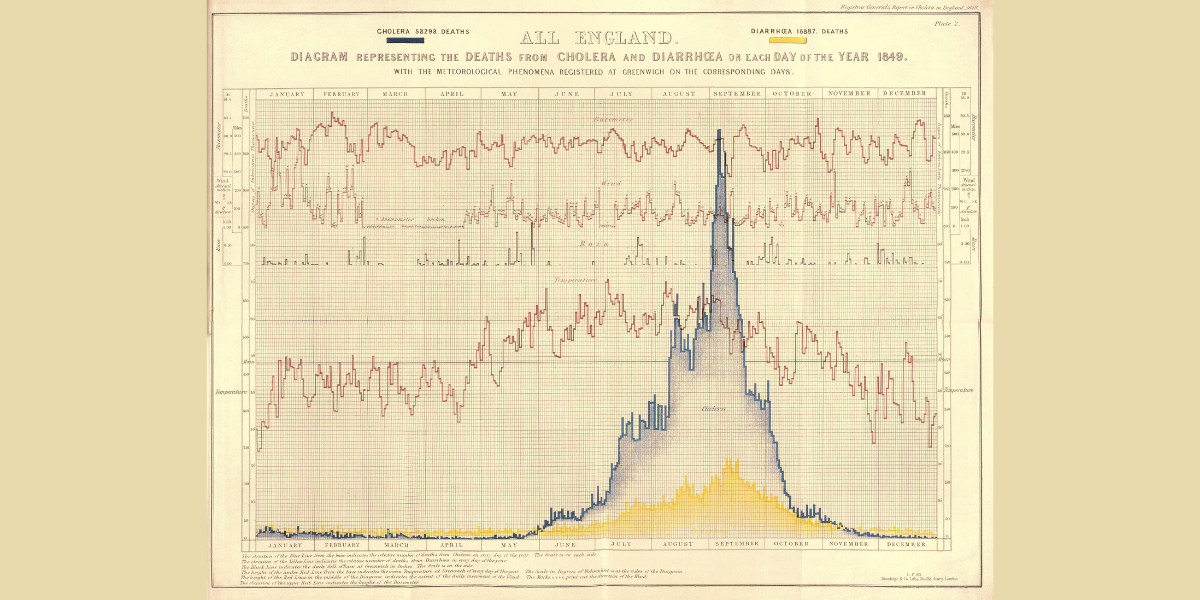

One of the basic steps in responsible statistical analysis is to report all outcomes present in the dataset, not just those that support the hypothesis. Presenting the data visually is also good practice, as it gives context to what the statistics are describing; for example, a scatter plot is far more informative than a table of data with a p-value tacked on.

Developing a clear, well-defined experimental design in advance, including the statistical methods intended for use, allows data to be collected in a way that makes the analysis as straightforward as possible. It also helps ensure the data is collected responsibly and guards against p-hacking, because the choice of test is fixed before the results are known. This practice also encourages the reporting of null results, which are just as valid as significant ones.
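
Deciding on the sample size is one concrete part of planning an analysis in advance, and a power calculation helps guard against inappropriately small samples. The sketch below uses the standard normal-approximation formula for comparing two group means, n = 2 × (z_(1−α/2) + z_(power))² / d², where d is the standardised effect size (Cohen's d) the study hopes to detect. It assumes a two-sided test and equal group sizes; `n_per_group` is a hypothetical helper, and dedicated tools based on the t distribution give slightly larger answers (for example, 64 rather than 63 per group for d = 0.5).

```python
from math import ceil
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.8):
    """Approximate sample size per group for a two-sided, two-sample comparison of means.

    Normal approximation: n = 2 * (z_{1-alpha/2} + z_{power})**2 / d**2,
    rounded up to the next whole participant.
    """
    z = NormalDist()  # standard normal distribution
    z_alpha = z.inv_cdf(1 - alpha / 2)
    z_power = z.inv_cdf(power)
    return ceil(2 * (z_alpha + z_power) ** 2 / effect_size ** 2)


# Conventional "small", "medium" and "large" effect sizes.
for d in (0.2, 0.5, 0.8):
    print(f"d = {d}: about {n_per_group(d)} participants per group")
```

Because n scales with 1/d², halving the effect size one expects to detect roughly quadruples the required sample, which is why small studies so often lack the power to detect realistic effects.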

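When choosing a statistical test, it is vital that the data meets the assumptions the test relies on; a standard two-sample t-test, for example, assumes independent observations, roughly normally distributed data and, in its classic form, equal variances in the two groups. Checking these assumptions is good practice and allows the researcher to choose the test that is most appropriate for the data.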
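
As a sketch of checking assumptions before testing, the function below compares two independent groups: it uses the Shapiro-Wilk test to check each group for normality and Levene's test to check for equal variances, then chooses between Student's t-test, Welch's t-test and the Mann-Whitney U test. This decision rule is a simplified, hypothetical illustration assuming NumPy and SciPy, not a universal recipe; ideally the assumptions are considered when the analysis is planned, and some statisticians prefer to default to Welch's test rather than choose based on a preliminary test. Whatever rule is used, it should be fixed before looking at the results, otherwise it becomes another route to p-hacking.

```python
import numpy as np
from scipy import stats


def compare_two_groups(a, b, alpha=0.05):
    """Check common assumptions, then pick a two-sample test accordingly.

    Hypothetical, simplified decision rule for illustration only.
    Returns (test_name, p_value).
    """
    a, b = np.asarray(a, dtype=float), np.asarray(b, dtype=float)
    # Shapiro-Wilk: a small p-value suggests a group is not normally distributed.
    normal = stats.shapiro(a).pvalue > alpha and stats.shapiro(b).pvalue > alpha
    if not normal:
        return "Mann-Whitney U", stats.mannwhitneyu(a, b, alternative="two-sided").pvalue
    # Levene: a small p-value suggests the group variances differ.
    equal_var = stats.levene(a, b).pvalue > alpha
    name = "Student's t-test" if equal_var else "Welch's t-test"
    return name, stats.ttest_ind(a, b, equal_var=equal_var).pvalue


# Usage with strongly right-skewed (log-normal) data, which fails the normality check.
rng = np.random.default_rng(42)
control = rng.lognormal(mean=0.0, sigma=1.0, size=50)
treated = rng.lognormal(mean=0.3, sigma=1.0, size=50)
test_name, p_value = compare_two_groups(control, treated)
print(test_name, round(float(p_value), 4))
```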

Paperstars provides a place to practise reviewing the statistical analysis in published scientific work: to assess how appropriate the analyses are, and to discuss the impact that the results of the statistical tests have had on the research landscape of a particular field.

One of the reasons we at Paperstars value open/raw data in our rating system is that it allows readers to explore the statistical possibilities themselves, which holds the authors accountable for good statistical practice through independent verification. This transparency is crucial for the long-term reproducibility of the research and creates a culture of trust in academic publishing and science in general.